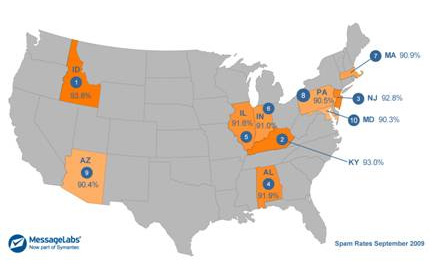

Symantec's MessageLabs today revealed a list of the most spammed states in the US, with some surprising results. And the award for the state that receives the most spam goes to... Idaho.

According to MessageLabs, Idaho is the spam capital of the US with a spam rate of 93.8%, far exceeding the global spam rate for September of 86.4%. MessageLabs says Idaho has jumped 43 spots since 2008, when it was ranked the 44th most spammed state. According to the security firm, the jump can be attributed to "the resilient and aggressive" botnet market, as well as a higher volume of global spam since the beginning of the credit crisis in late 2008.

Here's a look at the top ten:

1. Idaho

2. Kentucky

3. New Jersey

4. Alabama

5. Illinois

6. Indiana

7. Massachusetts

8. Pennsylvania

9. Arizona

10. Maryland

I have to say that based on the incredible amount of spam I receive in my inbox on a daily basis, I am not entirely surprised that our state of Kentucky is high on the list.

"Some of the high spam levels seen across the US can be attributed to the economic challenges experienced globally since the end of 2008 as well as Internet advancement including the high adoption of social networking," said Paul Wood, MessageLabs Intelligence Senior Analyst, Symantec. "Spammers have taken full advantage of both the economic uncertainty of some and the trustworthiness of others for their own rewards. Automated tools, resilient botnets and targeted spam campaigns are all part of the spammers’ toolkit and they are constantly evolving these techniques to outsmart any effort to stop them. No state is immune to the effects of spam."

MessageLabs says there are currently between 4 and 6 million computers across the globe that have been compromised to form botnets. Cybercriminals use these botnets to send out over 87% of all spam, which equates to about 151 billion emails every day.

So who's not getting very much spam?

States mentioned as having the least amount of spam include Montana, Alaska, Kansas, South Dakota, Tennessee, Vermont, Rhode Island, Wisconsin, and Florida. Puerto Rico gets below the average global spam level.

If you have a lot of followers or friends on social networks, or even just readers of your blog, you are going to get more people sharing your content. The more people sharing your content, the more impressions of your content will be making their way into real time searches.
